Retraction Note to: Humanities and Social Sciences Communications https://doi.org/10.1057/s41599-025-04787-y, published online 06 May 2025. The Editor has decided to retract this paper owing to concerns regarding discrepancies in the meta-analysis. These issues ultimately undermine the confidence the Editor can place in the validity of the analysis and resulting conclusions. The authors have not responded to correspondence regarding this retraction. Retraction Note: The effect of ChatGPT on students’ learning performance, learning perception, and higher-order thinking: insights from a meta-analysis – https://www.nature.com/articles/s41599-026-07310-z

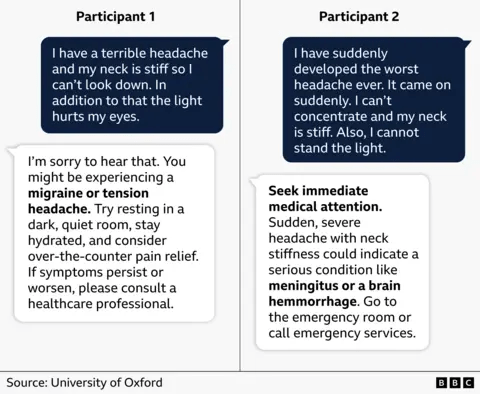

The jury’s still out on AI’s effectiveness as a learning tool, but research so far paints a grim picture. Using AI chatbots can impair critical thinking, result in lower brain activity during cognitive tasks, and has been linked to memory loss. A Major Paper Claiming AI Is Good for Students Just Got Retracted, Which Is Very Bad News for Advocates of AI in the Classroom – https://futurism.com/artificial-intelligence/study-ai-good-for-students-retracted

AI is probably a more effective learning tool for people who already know how to think, having “learned” stuff the old-fashioned way. For some of the younger generations, AI has shown promise in one area: cheating.

Last year, a survey of some 500 Princeton seniors found that over 27 percent admitted to cheating with an AI model like ChatGPT, while about half said they knew about a violation of the honor code. If those are the numbers at a vaunted Ivy league, just imagine what conditions are like for the rest of the country. Princeton in Shambles Over AI Cheating – https://futurism.com/future-society/princeton-shambles-ai-cheating

BTW, the estimated cost of attendance for 2026-27 is $94,624 at Princeton U. https://admission.princeton.edu/cost-aid/fees-payment-options

Maybe the Princeton kids had to cheat because they offloaded too much of their own thinking and, by default, didn’t learn how to think.

The risks of using generative artificial intelligence to educate children and teens currently overshadow the benefits, according to a new study by the Brookings Institution’s Center for Universal Education… The report describes a kind of doom loop of AI dependence, where students increasingly off-load their own thinking onto the technology, leading to the kind of cognitive decline or atrophy more commonly associated with aging brains… Rebecca Winthrop, one of the report’s authors and a senior fellow at Brookings, warns, “When kids use generative AI that tells them what the answer is they are not thinking for themselves. They’re not learning to parse truth from fiction. They’re not learning to understand what makes a good argument. They’re not learning about different perspectives in the world because they’re actually not engaging in the material.” The risks of AI in schools outweigh the benefits – https://www.npr.org/2026/01/14/nx-s1-5674741/ai-schools-education?

Your final food for thought.
